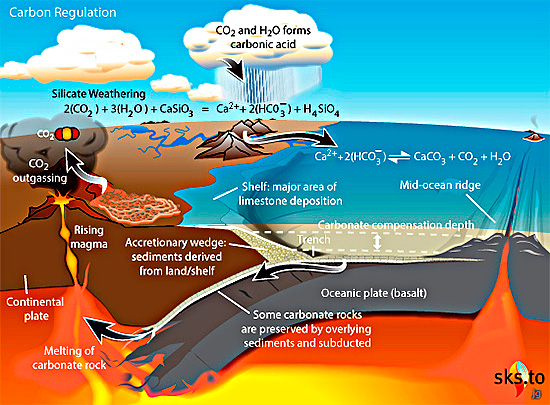

The notion of large-scale use of hydrogen as an energy source has a surprisingly long history. It was first proposed in 1923 by J.B.S. Haldane, who envisaged using power from wind turbines to electrolyse water into hydrogen and oxygen, so that this renewable source’s highly variable output could be stored in the form of hydrogen. Since the only other output is oxygen, a hydrogen economy might seem to avoid the global warming caused by the current release of greenhouse gases. However, as a 2023 post on Earth-logs concluded, of all the means for mass production and use of hydrogen only one is a truly ‘green’ energy source: so-called ‘white’ hydrogen, emitted from rock by natural processes. It is known to be generated when water breaks down the mineral olivine [(Fe,Mg)2SiO4] in the absence of oxygen, shown here for olivine’s iron end-member:

3Fe2SiO4 + 2H2O → 2Fe3O4 + 3SiO2 + 2H2
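
As a quick check on the stoichiometry, the atoms on each side of the iron-olivine reaction can be counted. The sketch below is only illustrative: the species dictionaries and the `count_atoms` helper are written by hand for this example and are not taken from any cited source. It confirms that three formula units of Fe2SiO4 reacting with two of water balance against two of magnetite, three of silica and two of H2.

```python
from collections import Counter

# Atom counts per formula unit, written out by hand (no formula parser).
FAYALITE = {"Fe": 2, "Si": 1, "O": 4}   # Fe2SiO4, iron end-member of olivine
WATER = {"H": 2, "O": 1}                # H2O
MAGNETITE = {"Fe": 3, "O": 4}           # Fe3O4
SILICA = {"Si": 1, "O": 2}              # SiO2
HYDROGEN = {"H": 2}                     # H2


def count_atoms(side):
    """Sum atoms over (coefficient, species) pairs on one side of a reaction."""
    total = Counter()
    for coeff, species in side:
        for element, n in species.items():
            total[element] += coeff * n
    return total


reactants = count_atoms([(3, FAYALITE), (2, WATER)])
products = count_atoms([(2, MAGNETITE), (3, SILICA), (2, HYDROGEN)])
balanced = reactants == products

print("reactants:", dict(reactants))
print("products: ", dict(products))
print("balanced: ", balanced)
```

Each side carries 6 Fe, 3 Si, 14 O and 4 H, so every two molecules of water consumed release two molecules of hydrogen.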

A more complex reaction is the hydration of olivine to the mineral serpentine [Mg3Si2O5(OH)4], which also yields hydrogen. Olivine is the dominant mineral in the Earth’s upper mantle and is abundant in crustal basalts and ultramafic rocks too. Ophiolites, slices of oceanic lithosphere added by tectonics to the continental crust, are therefore obvious targets for seeking natural hydrogen seepage. Yet such surface gas escapes have been documented from only a few sites, including an irrigation well in rural Mali that emitted gas containing 98% hydrogen, and a few natural springs in the Oman ophiolite.

The latest study may have taken the hydrogen economy to a literally deeper level (Sherwood Lollar, B. & Warr, O. 2026. Decadal record of continental H2 reservoirs reveals potential for subsurface microbial life and natural H2 exploration. Proceedings of the National Academy of Sciences, v. 123, article e2603895123; DOI: 10.1073/pnas.2603895123. PDF requests to owarr@uOttawa.ca and/or barbara.sherwoodlollar@utoronto.ca). Over fifteen years Barbara Sherwood Lollar and Oliver Warr, of the Universities of Toronto and Ottawa, Canada, monitored gas released by 35 boreholes originally drilled to assess and plan mining of an orebody in Precambrian basement rocks at Kidd Creek near Timmins, Ontario. On average, each of the boreholes released 8 kg of hydrogen per year. Scaled up to its 15 thousand exploratory boreholes, the mine as a whole is estimated to yield 140 metric tons of the gas annually. That could provide 4.7 gigawatt-hours of energy per year, sufficient for the needs of more than 400 Canadian homes.
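
The energy figure can be checked with a few lines of arithmetic. This is a minimal sketch rather than the authors’ calculation: it assumes a lower heating value for hydrogen of about 120 MJ per kg (a standard textbook value, not stated in the source) and starts from the quoted 140 metric tons rather than re-deriving it from the per-borehole average. The function and variable names are made up for the example.

```python
# Assumption (not from the source): lower heating value of hydrogen, ~120 MJ/kg.
LHV_MJ_PER_KG = 120.0
MJ_PER_KWH = 3.6  # exact: 1 kWh = 3.6 MJ


def annual_energy_gwh(tonnes_h2_per_year: float) -> float:
    """Chemical energy content of a yearly hydrogen yield, in GWh."""
    kg = tonnes_h2_per_year * 1000.0
    kwh = kg * LHV_MJ_PER_KG / MJ_PER_KWH
    return kwh / 1e6


mine_yield_t = 140.0  # metric tons of H2 per year, as quoted in the text
homes = 400           # number of homes, as quoted in the text

energy_gwh = annual_energy_gwh(mine_yield_t)
per_home_mwh = energy_gwh * 1000.0 / homes

print(f"{energy_gwh:.2f} GWh per year")
print(f"{per_home_mwh:.1f} MWh per home per year")
```

Under that assumption, 140 metric tons of hydrogen hold roughly 4.7 GWh of chemical energy a year, or a little under 12 MWh for each of 400 homes. Any conversion losses in turning the gas into heat or electricity would reduce what is actually delivered.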

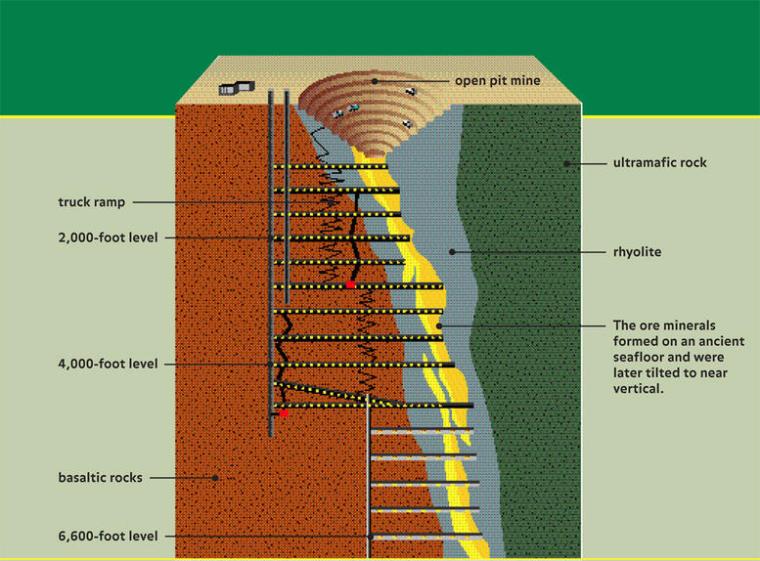

The Timmins mining district is typical of Archaean greenstone belts in the Canadian Shield and in cratons across the world: supracrustal rocks including ultramafic and mafic volcanics and a variety of metasedimentary rocks. The district is historically Canada’s largest gold producer, but also hosts ores of many other metals. The Kidd Creek Cu-Ag-Zn mine is one of the deepest in North America, penetrating interlayered felsic, mafic, ultramafic, and metasedimentary rocks to a depth of 2.9 km below the surface. The ores formed by submarine hydrothermal processes around 2.7 billion years ago. The sampled boreholes were drilled horizontally at mine levels between 2.04 and 2.9 km below the surface to penetrate the ore zone and its mafic-ultramafic host rocks. Rather than yielding gas directly, the holes release briny fluids in which hydrogen, helium and various hydrocarbon gases are dissolved. These are similar to fluids issuing from other deep mines, but their chemistry shows that they formed mainly through inorganic reactions with the bedrock rather than through microbial metabolism, which exploits a variety of chemical interactions in the ore, such as reduction of sulfate ions to sulfide. The authors have studied hydrogen yields from a number of other mines in mafic-ultramafic rocks, and these are comparable with those at Kidd Creek. So it may be that hydrogen in vast volumes is being emitted by existing and abandoned metal mines in such igneous terrains.

Sherwood Lollar and Warr authoritatively outline the economic potential of hydrogen production for remote communities and mines in greenstone-belt terrains. They also assess, as hydrogen sources, ophiolites and kimberlites that are being actively serpentinised by near-surface groundwater and that host associated microbial ecosystems. The few that have been studied seem to produce even larger amounts of hydrogen. But the authors note that, being closer to the surface, these geological features are generally ‘open systems’ prone to rapid loss of gases. However, in the manner of hydrocarbon gas fields, some ophiolites may host large amounts of hydrogen if they are capped by younger clay-rich sedimentary strata. Whatever the case, the global warming of what might be called the ‘Hydrocarbon Age’ is set to become a disaster. Breaking its death grip should be the principal economic agenda, which requires the most rapid turn to long-term energy alternatives. Natural hydrogen could be a part of that, and hopefully the work of Sherwood Lollar and Warr, and others like them, will lead to determined exploration and assessment of this novel physical resource. In Scandinavia a Nordic Hydrogen Route is being proposed. This Swedish-Finnish initiative is based on the Scandinavian Shield, its greenstone terrains and the numerous mines driven into them. One would hope that its entrepreneurs are considering naturally emitted hydrogen rather than, or as well as, hydrogen from sources given other colour labels.

See also: Canada’s Billion-Year-Old Rocks Could Hold the Future of Clean Energy. Sci Tech Daily, 21 May 2026.