Elizabeth Pennisi comments on three comparative studies of the genetics of modern fish and terrestrial tetrapods in a recent online news article in Science. Apparently some fish genes were, perhaps fortuitously, ‘multipurpose’. They may have been co-opted during the Devonian colonisation of land, helping limbs, lungs and aspects of the nervous system to evolve as shallow-water fishes adapted to climbing out onto dry land. (Pennisi, E. 2021. Fish had the genes to adapt to life on land—while they were still swimming the seas. Science, News 10 February 2021; DOI: 10.1126/science.abg9265).

Author: zooks777

The ancestry of our opposable thumbs

Since the appearance of smartphones and the explosion of social media, our thumbs have found a new niche: typing on a handheld mobile. At a desktop keyboard most of us don’t use our thumbs very much, unless we have mastered fast touch typing, but for a huge variety of manual tasks thumbs are essential. The first makers of sophisticated stone tools must have been able to grip between fingers and thumb to manipulate their raw materials and to perform the various stages in creating a razor-sharp edge. To do that, as most of us are aware, the tip of the thumb must be capable of touching the tips of all four fingers; an opposable thumb is essential for the ‘precision grip’. Being able to tell when opposable thumbs evolved depends, of course, on finding fossil hand bones. Because they are made of many small bones that readily disarticulate, hands are a lot more fragile than long bones or skulls. Complete fossil hands are rare, as are feet, but a number have been found more or less complete. Whichever hominin first evolved opposable thumbs would have gained a considerable advantage over those that had not.

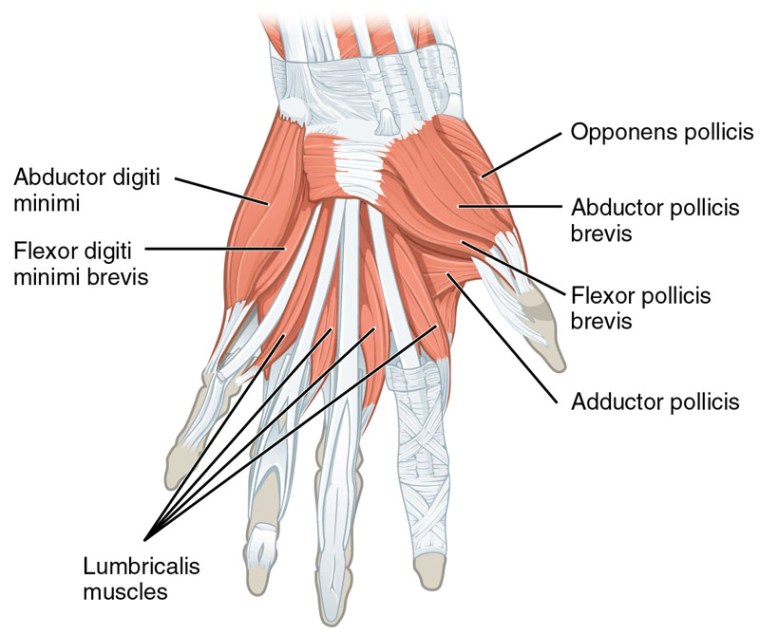

Simply comparing the shapes of fossilised finger and thumb bones with those of modern humans and other living primates has, so far, not resolved with certainty which hominin groups did or did not have opposable thumbs. The key lies in the muscles that operate them. It has become commonplace to reconstruct faces and even whole bodies from fairly complete skeletal remains by modelling musculature from the position and shape of the points where muscles attach to bone. But that becomes increasingly difficult for the small and intricate attachments in hands. The critical muscle for opposable thumbs is the Opponens pollicis (the Latin for thumb is pollex, genitive pollicis): a small triangular muscle that operates in conjunction with three others (with pollicis in their Latin names).

Fotios Karakostis and six colleagues from German, Swiss and Greek universities have devised software that can model muscles in 3-D (Karakostis, F.A. et al. 2021. Biomechanics of the human thumb and the evolution of dexterity. Current Biology, v.31, online; DOI: 10.1016/j.cub.2020.12.041). Based on the anatomy of human and chimpanzee hand muscles and the positions where they attach to individual bones, they established a series of parameters that clearly distinguish the morphological, and probably functional, characteristics of the thumbs of these living primates. Complete sets of thumb bones from four Neanderthal skeletons show that their thumbs differed significantly, but only slightly, from those of anatomically modern humans. Those from three species of Australopithecus (africanus, sediba and afarensis) lie between ours and chimpanzees’, with significantly closer affinity to chimpanzees. It seems that australopithecines of whatever age lacked opposable thumbs; if they made and used tools at all, their capabilities were probably as limited as those of modern chimps: holding, pounding and poking. A single set of hominin thumb bones about two million years old, found in the famous Swartkrans Cave in South Africa, shows as close an affinity to humans in thumb opposability as do those of Neanderthals. So at 2 Ma there was a hominin species sufficiently dextrous to make and use sophisticated tools. The problem is that the bones are not directly associated with other skeletal remains and have been ascribed by different authors either to H. habilis or to Paranthropus robustus. Interestingly, this paranthropoid has also been suggested (controversially) to have been the first known hominin to use fire, and to have used digging sticks. No one has ever suggested that the genus Homo descended from a paranthropoid ancestor or vice versa, yet these massively jawed beings did coexist with early humans in East Africa for over a million years.
The other hominin to have left hands in the geological record was Homo naledi, a controversial species because it was found in a barely accessible cave chamber and took a while to date. This context gave rise to the notions that it was a direct ancestor of humans and that it buried its dead in a special place. However, it turned out to be relatively recent, at about 280 ka (see: Homo naledi: an anti-climax; May 2017). Homo naledi does seem to have had opposable thumbs, but there is no associated evidence for either tool making or tool use.

Fascinating as the methodology outlined by Karakostis et al. is, their findings do not take our knowledge of early human capabilities much further. Tools were made and used as far back as 3.3 Ma, and we know that H. habilis was doing this by about 2.6 Ma, i.e. long before the first evidence for opposable thumbs; and who had them first remains uncertain. What is clear is that sophisticated tools, such as the bifacial Acheulian artefacts whose manufacture demands great dexterity, appeared only at about 1.8 Ma, after the potential for nimble dexterity had arisen. The same goes for the first migration out of Africa, at about the same time, which demanded a resourcefulness that may have sprung from the ability to manipulate natural materials effectively and carefully.

See also: Handwerk, B. 2021. How dexterous thumbs may have helped shape evolution two million years ago. (Smithsonian Magazine, 28 January 2021); Bower, B. 2021. Humanlike thumb dexterity may date back as far as 2 million years ago. (Science News, 28 January 2021)

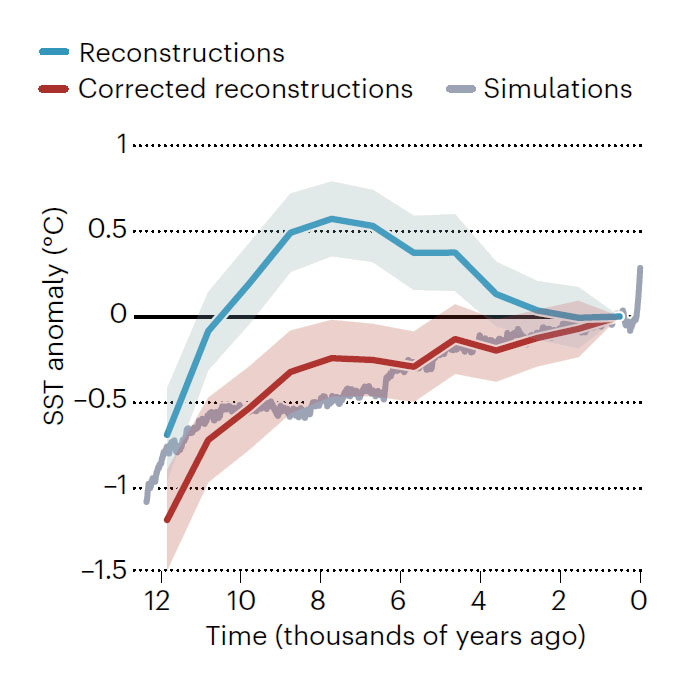

Global warming: an important revision

Part of the turmoil surrounding the issue of anthropogenic global warming hinges on whether or not observed changes in annual mean global temperature since the Industrial Revolution may be due to natural climatic cycles similar to those that operated earlier in the Holocene Epoch. Actual measurements of the temperature of the air, the sea surface and so on date back only to the early 18th century, when calibrated thermometers came into use. Getting an idea of natural climate change through the 11.65 thousand years since the end of the last period of extensive glaciation depends on a variety of indirect measurements, or proxies, for temperature. For sea-surface temperature (SST) the proxy of choice is based on the way that surface-dwelling organisms, specifically planktic foraminifera, extract magnesium and calcium from sea water to construct their tests (shells). The warmer the sea surface, the more magnesium is incorporated as a trace element into the calcium carbonate that forms their tests, so the Mg/Ca ratio in planktic foram tests recovered from sea-floor sediment layers changes in a reliably precise fashion with warming and cooling. Following the frigid millennium of the Younger Dryas, this proxy suggests that the average sea-surface temperature at mid-latitudes in the North Atlantic rose to a maximum of 0.5°C above the present value between 10 and 6 thousand years ago. After this Holocene Climate Optimum the sea surface seems to have cooled until very recently. Much the same pattern has been recorded in sediment cores from many parts of the world. Another approach is based on the varying amount of solar heating modelled by the Milankovitch theory of astronomical climatic forcing, together with a variety of other forcing factors, such as albedo changes and the greenhouse effect. The two sets of data, one measured and the other based on well-accepted simulations, do not agree: the modelling suggests a steady rise in SST throughout the Holocene and no climatic optimum.
This conundrum casts doubt either on computer modelling of climate forcing, otherwise reliable on broader time scales, or on some unsuspected aspect of the Mg/Ca palaeothermometer. The latter could involve some kind of bias.
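The Mg/Ca palaeothermometer is usually expressed as an exponential calibration, Mg/Ca = B·exp(A·T). The Python sketch below shows the forward and inverse forms. The constants B = 0.38 mmol/mol and A = 0.09 per °C are assumed, illustrative values of the order used in published planktic-foram calibrations, not those of any study discussed here. The sketch also shows why a 0.5°C anomaly is a subtle signal: it changes the measured ratio by only a few percent.

```python
import math

# Assumed exponential calibration: Mg/Ca (mmol/mol) = B * exp(A * T), T in deg C.
# B and A are illustrative values of the order used in published planktic-foram
# calibrations, not the constants of any specific study.
B = 0.38  # pre-exponential constant, mmol/mol (assumed)
A = 0.09  # exponential temperature sensitivity, per deg C (assumed)


def mg_ca_from_temperature(t_celsius: float) -> float:
    """Forward model: expected test Mg/Ca for a given calcification temperature."""
    return B * math.exp(A * t_celsius)


def temperature_from_mg_ca(mg_ca: float) -> float:
    """Inverse model: calcification temperature implied by a measured Mg/Ca ratio."""
    return math.log(mg_ca / B) / A


# A test grown at 25.0 C and one grown 0.5 C warmer, the size of the
# Holocene 'optimum' anomaly described in the text.
base = mg_ca_from_temperature(25.0)
warm = mg_ca_from_temperature(25.5)
print(f"Mg/Ca at 25.0 C: {base:.3f} mmol/mol")
print(f"Mg/Ca at 25.5 C: {warm:.3f} mmol/mol ({100 * (warm / base - 1):.1f}% higher)")
print(f"Round trip back to temperature: {temperature_from_mg_ca(warm):.2f} C")
```

With these assumed constants each 1°C of warming raises Mg/Ca by roughly 9%, so the 0.5°C Holocene optimum corresponds to a change of only about 4.6%, which is why small systematic biases in when the forams grew can matter.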

Samantha Bova of Rutgers University, USA, and colleagues from the US and China have examined the possibility of seasonal bias in estimates of SSTs from West Pacific sea-floor sediment cores off New Guinea (Bova, S. et al. 2021. Seasonal origin of the thermal maxima at the Holocene and the last interglacial. Nature, v. 589, p. 548–553; DOI: 10.1038/s41586-020-03155-x). First they examined the Mg/Ca proxy record from the last (Eemian) interglacial episode (128–115 ka), on the grounds that astronomical modelling indicates much stronger seasonal contrasts in solar warming during that period, whereas other forcing factors were comparatively weak. By calculating the varying sensitivity of the older Mg/Ca record to seasonal factors, they were able to devise a method of correcting such records for seasonal bias and applied it to the Holocene data from northeast New Guinea. The corrected Holocene SST record lacks the previously suspected climate optimum and its peak at ~8000 years ago. Instead, it reveals a continuous warming trend throughout the Holocene, with the early part far cooler than previously indicated by uncorrected SST thermometry. That may have resulted from increased reflection of solar radiation (albedo forcing) by a larger area of remnant ice sheets on high-latitude parts of the continents than was present during the warmer early Eemian. Final melting of the great Northern Hemisphere ice sheets took until about 6500 years ago, after which albedo effects would have been roughly the same as at present. Thereafter, rising levels of atmospheric greenhouse gases warmed the planet towards modern levels.
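The sketch below illustrates the kind of seasonal bias that Bova et al. set out to correct; it is not their correction method. It assumes a sinusoidal seasonal cycle of SST and foraminifera that grow mainly in the warm season, so that a sediment sample records a production-weighted mean rather than the annual mean. All temperatures, amplitudes and the weighting function are hypothetical. With a larger seasonal contrast early on, the proxy can record cooling even while the annual mean warms.

```python
import math

MONTHS = 12


def monthly_sst(annual_mean, amplitude, peak_month=0):
    """Hypothetical sinusoidal seasonal SST cycle (deg C), one value per month."""
    return [annual_mean + amplitude * math.cos(2 * math.pi * (m - peak_month) / MONTHS)
            for m in range(MONTHS)]


def foram_weights(peak_month=0):
    """Assumed relative foram production per month: peaks in the warm season, never negative."""
    return [1 + math.cos(2 * math.pi * (m - peak_month) / MONTHS) for m in range(MONTHS)]


def annual_average(ssts):
    """True annual mean SST."""
    return sum(ssts) / len(ssts)


def proxy_temperature(ssts, weights):
    """Temperature recorded by a sediment sample: production-weighted mean over the year."""
    return sum(t * w for t, w in zip(ssts, weights)) / sum(weights)


weights = foram_weights()
# Hypothetical scenarios: strong seasonality early, weaker seasonality but a warmer mean later.
early = monthly_sst(annual_mean=27.0, amplitude=2.0)
late = monthly_sst(annual_mean=27.3, amplitude=1.0)

for label, ssts in (("early", early), ("late", late)):
    print(f"{label}: annual mean {annual_average(ssts):.2f} C, "
          f"proxy {proxy_temperature(ssts, weights):.2f} C")
```

Under these assumed weights the recorded temperature equals the annual mean plus half the seasonal amplitude, so the annual mean warms by 0.3°C while the summer-weighted proxy falls from 28.0 to 27.8°C.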

Bova et al.’s findings fundamentally change the context for modelling future climate change, and also for interpreting all previous interglacials, whose palaeotemperature records remain uncorrected. It seems likely that none of them had an early warm episode. As regards the future, climate modelling will have to change its parameters. As for climate-change sceptics, two of their favourite arguments have been undermined. There are no longer signs of major, natural ups and downs in the early Holocene that might suggest current warming is simply repeating such fluctuations. And the other aspect of the Holocene climate conundrum, that greenhouse gases increased naturally from 6000 years ago while global mean SSTs declined, no longer holds, removing it from the sceptics’ armoury.

See also: Hertzberg, J. 2021. Palaeoclimate puzzle explained by seasonal variation. Nature, v. 589, p. 521-522; DOI: 10.1038/d41586-021-00115-x. Kiefer, P. 2021. Earth used to be cooler than we thought, which changes our math on global warming. Popular Science, 28 January 2021

How flowering plants may have regulated atmospheric oxygen

Ultimately, the source of free oxygen in the Earth System is photosynthesis, but how much of it accumulates is the result of a chemical balance in the biosphere and hydrosphere that operates at the surface and just beneath it in sediments. Burial of dead organic carbon in sedimentary rocks allows free oxygen to accumulate, whereas weathering and oxidation of that carbon, largely to CO2, counteracts oxygen build-up. The balance is reflected in the current proportion of 21% oxygen in the atmosphere. Yet in the past oxygen levels have been much higher. During the Carboniferous and Permian Periods they rose dramatically, to an all-time high of 35% in the late Permian (about 250 Ma ago). This is famously reflected in fossils of giant dragonflies and other insects from the later part of the Palaeozoic Era. Insects breathe passively via tiny tubes (tracheae), through whose walls oxygen diffuses, unlike vertebrates that actively drive air into their lungs, where O2 dissolves directly into the blood. Insect size is thus limited by the oxygen content of air: to grow wingspans of up to 2 metres, a modern dragonfly’s body would have to consist only of tracheae, with no room for a gut; it would starve.
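The burial-versus-weathering balance described above can be written as a one-box mass balance. The sketch below is a toy, not a published model. It assumes that each mole of organic carbon buried leaves behind the mole of O2 released when it was fixed by photosynthesis (CO2 + H2O → CH2O + O2), and that oxidative weathering consumes O2 at a rate proportional to the oxygen reservoir, which is a simplification. Oxygen is expressed relative to the present atmospheric level (PAL = 1), and the flux and rate constant are hypothetical.

```python
def steady_state_o2(burial_flux, weathering_k):
    """Oxygen level at which burial (source) exactly balances weathering (sink)."""
    return burial_flux / weathering_k


def simulate_o2(o2_start, burial_flux, weathering_k, years, dt):
    """Forward-Euler integration of dO2/dt = burial_flux - weathering_k * O2.

    o2_start     -- initial oxygen, in PAL (present atmospheric level = 1.0)
    burial_flux  -- O2 left behind by organic-carbon burial, PAL per year (hypothetical)
    weathering_k -- first-order oxidative-weathering rate constant, per year (hypothetical)
    """
    o2 = o2_start
    for _ in range(int(years / dt)):
        o2 += dt * (burial_flux - weathering_k * o2)
    return o2


# Hypothetical values giving a 10-million-year response time (1 / weathering_k).
K = 1e-7        # per year
BURIAL = 1e-7   # PAL per year, so the steady state is 1.0 PAL
print("steady state:", steady_state_o2(BURIAL, K))

# Double the burial rate and let the system evolve for 100 million years.
o2_after = simulate_o2(1.0, 2 * BURIAL, K, years=100e6, dt=1e4)
print(f"after doubling burial: {o2_after:.3f} PAL "
      f"(steady state {steady_state_o2(2 * BURIAL, K):.1f} PAL)")
```

In this toy, doubling the burial flux doubles the level at which oxygen stabilises, and anything that suppresses burial lowers it; the response time is set by 1/weathering_k.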

During the early Mesozoic oxygen fell rapidly to around 15% in the Triassic, then rose through the Jurassic and Cretaceous Periods to about 30%, only to fall again to present levels during the Cenozoic Era. Incidentally, the mass extinction at the end of the Cretaceous (the K-Pg boundary event) is marked in the marine sedimentary record by unusually high amounts of charcoal. That is evidence that the Chicxulub impact was accompanied by global wildfires, which a high-oxygen atmosphere would have encouraged. The high oxygen levels of the Cretaceous coincided with the emergence of modern flowering plants, the angiosperms. Six British geoscientists have analysed the possible influence on the Earth System of this new and eventually dominant component of the terrestrial biosphere (Belcher, C.M. et al. 2021. The rise of angiosperms strengthened fire feedbacks and improved the regulation of atmospheric oxygen. Nature Communications, v. 12, article 503; DOI: 10.1038/s41467-020-20772-2).

The episodic occurrence of charcoal in sedimentary rocks bears witness to wildfires having affected terrestrial ecosystems since the decisive colonisation of the land by plants at the start of the Devonian, 420 Ma ago. Fire and vegetation have gone hand in hand ever since, and the evolution of land plants has partly been through adaptations to burning. For instance, the cones of some conifer species open only during wildfires, shedding their seeds after the burning. Some angiosperm seeds, such as those of eucalyptus, germinate only after being subjected to fire. The nature of wildfires varies according to particular ecosystems: needle-like foliage burns differently from angiosperm leaves, grassland fires differ from those in forests, and so on. Massive fires on the Earth’s surface are not inevitable, however. Evidence for wildfires is absent from those times when the atmosphere’s oxygen content dipped below an estimated 16%. The current oxygen level encourages fires in dry forest during drought, as those in Victoria, Australia, and in California during 2020 amply demonstrated. It is possible that with oxygen above 25% dry forest would burn again in the next dry season before it could regenerate. Wet forest, as in Brazil and Indonesia, can burn under present conditions, but only if set alight deliberately. Evidence of a global firestorm after the K-Pg extinction implies that tropical rain forest burns easily when oxygen is above 30%. So how did the dominant flora of Earth’s huge tropical forests, the flowering angiosperms, evolve and hang on when conditions were ripe for them to burn on a massive scale?
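The oxygen thresholds cited in this paragraph can be collected into a simple lookup that makes the bands explicit. This is only a paraphrased restatement of the approximate figures given in the text, not output from Belcher et al.’s model, and in reality the band edges are fuzzy.

```python
from bisect import bisect_right

# Approximate atmospheric O2 thresholds (% by volume) quoted in the text.
THRESHOLDS = [16.0, 25.0, 30.0]
# One paraphrased description per band: below 16%, 16-25%, 25-30%, 30% and above.
REGIMES = [
    "no evidence for wildfires",
    "fires occur; at today's ~21% dry forest burns in drought, wet forest only if deliberately ignited",
    "dry forest may burn again before it can regenerate",
    "even wet tropical forest burns easily",
]


def fire_regime(o2_percent: float) -> str:
    """Return the paraphrased fire regime for a given atmospheric O2 percentage."""
    return REGIMES[bisect_right(THRESHOLDS, o2_percent)]


for level in (15, 21, 27, 35):
    print(f"{level}% O2: {fire_regime(level)}")
```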

Early angiosperms had small leaves, suggesting that they were of small stature and grew in stands of open woodland (perhaps shrubland) that benefited from the fire protection offered by wetlands. ‘Weedy’ plants regenerate and reach maturity more quickly than do species destined to become tall trees. Where wildfires are frequent, tree-sized plants, such as the gymnosperms of the Mesozoic, cannot attain maturity, because they must first grow above the height of the flames. Diminutive early angiosperms in a forest understorey would probably have outcompeted their more ancient companions. Yet to become the mighty trees of later rain forests, angiosperms must somehow have regulated atmospheric oxygen so that it declined well below the level at which wet forest is ravaged by natural wildfires. The oldest evidence for angiosperm rain forest dates to 59 Ma, when perhaps more primitive tropical trees had been almost wiped out by wildfires. Did angiosperms also encourage wildfires that consumed oxygen on a massive scale, as well as evolving to resist their effects on plant growth? Claire Belcher et al. suggest that they did, through a series of evolutionary steps. Key to stabilising oxygen levels at around 21%, the authors propose, was the angiosperms’ suppression of the weathering of phosphorus from rocks and/or of the transfer of that major nutrient from the land to the oceans. On land nitrogen is the most important nutrient for biomass, whereas phosphorus is the limiting nutrient in the ocean. Reducing its supply to the ocean through angiosperm dominance on land therefore reduces marine productivity and the burial of organic carbon in ocean sediments. In a very roundabout way, therefore, angiosperms control the key factor that allows oxygen to build up in the atmosphere, by encouraging mass burning and suppressing carbon burial. Today, about 84 percent of wildfires are started by human activities. As yet we have little, if any, idea of how such disruption of the natural flora-fire system is going to affect future ecosystems. The ‘Pyrocene’ may be an outcome of the ‘Anthropocene’ …

The oldest known impact structure (?)

That large, rocky bodies in the Solar System were heavily bombarded by asteroidal debris at the end of the Hadean Eon (between 4.1 and 3.8 billion years ago) is apparent from the ancient cratering records that they still preserve, matched with the dating of impact-melt rocks on the Moon. Being a geologically dynamic planet, the Earth preserves no tangible, indisputable evidence for this Late Heavy Bombardment (LHB), and until quite recently it could only be inferred to have been battered in this way. That it actually did happen emerged from a study of tungsten isotopes in early Archaean gneisses from Labrador, Canada (see: Tungsten and Archaean heavy bombardment, August 2002; and Did mantle chemistry change after the late heavy bombardment? September 2009). Because large impacts deliver vast amounts of energy in little more than a second (see: Graveyard for asteroids and comets, Chapter 10 in Stepping Stones), they have powerful consequences for the Earth System, as witness the Chicxulub impact off the Yucatán Peninsula of Mexico, which resulted in a mass extinction at the end of the Cretaceous Period. That seemingly unique coincidence of a large impact with devastation of Earth’s ecosystems probably resulted from the geology beneath the impact: thick evaporite beds of calcium sulfate, whose extreme heating would have released vast amounts of SO2 to the atmosphere. Its fall-out as acid rain would have dramatically affected marine organisms with carbonate shells. Impacts on land would tend to expend most of their energy throughout the lithosphere, resulting in partial melting of the crust or, in the case of the largest such events, of the upper mantle.

The further back in time, the greater the difficulty in recognising visible signs of impacts, because of erosion or later deformation of the lithosphere. With a single possible exception, every known terrestrial crater or structure that may plausibly be explained by impact is younger than 2.5 billion years, i.e. post-Archaean. Yet rocky bodies in the Solar System reveal that after the LHB the frequency and magnitude of impacts steadily decreased from high levels during the Archaean; there must have been impacts on Earth during that Eon, and some may have been extremely large. In the least deformed Archaean sedimentary sequences there is indirect evidence that they did occur, in the form of spherules that represent droplets of silicate melt (see: Evidence builds for major impacts in Early Archaean; August 2002, and Impacts in the early Archaean; April 2014), some of which contain unearthly proportions of different chromium isotopes (see: Chromium isotopes and Archaean impacts; March 2003). As regards the search for very ancient impacts, rocks of Archaean age form a very small proportion of the Earth’s continental surface, the bulk having been buried by younger rocks. Most of those that we can examine have been subjected to immense deformation, often repeatedly during later times.

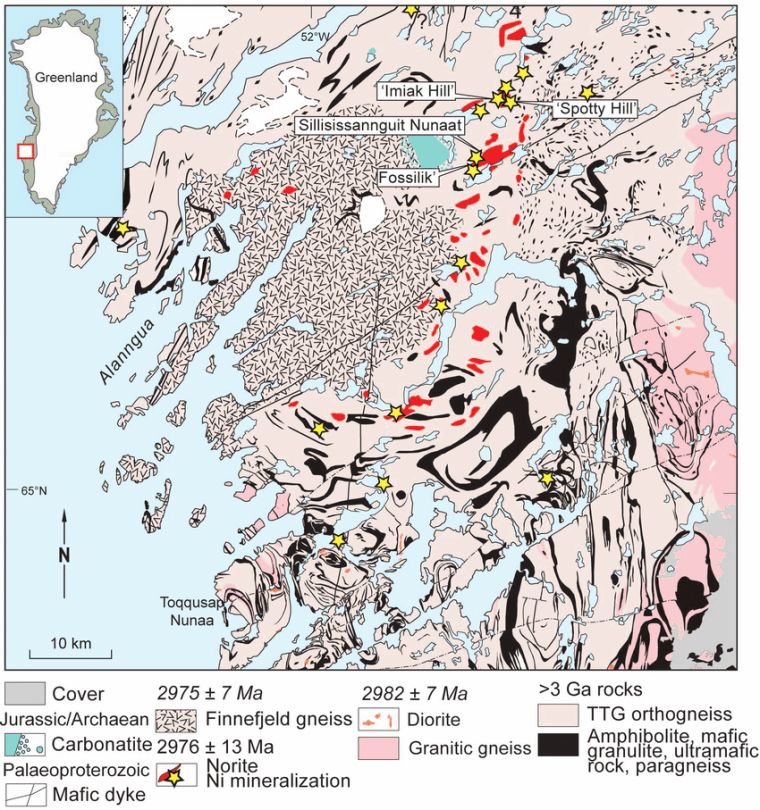

There is, however, one possibly surviving impact structure from Archaean times, and oddly it was suspected in one of the most structurally complex areas on Earth: the Akia Terrane of West Greenland. Aeromagnetic surveys hint at two concentric, circular anomalies centred on a 3.0 billion-year-old zone of grey gneisses (see figure) defining a cryptic structure. It is surrounded by hugely deformed bodies of ultramafic and mafic rocks (black) and nickel mineralisation (red). In 2012 the whole complex was suggested to be a relic of a major impact of that age, the ultramafic-mafic bodies being ascribed to high degrees of impact-induced melting of the underlying mantle. The original proposers backed up their suggestion with several associated geological observations, the most crucial being supposed evidence for shock deformation of mineral grains and anomalous concentrations of platinum-group metals (PGM).

A multinational team of geoscientists has subjected the area to detailed field surveys, radiometric dating, oxygen-isotope analysis and electron microscopy of mineral grains to test this hypothesis (Yakymchuk, C. and 8 others 2020. Stirred not shaken; critical evaluation of a proposed Archean meteorite impact in West Greenland. Earth and Planetary Science Letters, v. 557, article 116730 (advance online publication); DOI: 10.1016/j.epsl.2020.116730). Tectonic fabrics in the mafic and ultramafic rocks are clearly older than the 3.0 Ga gneisses at the centre of the structure. Electron microscopy of ~5500 zircon grains shows not a single example of the parallel twinning associated with intense shock. Oxygen isotopes in 30 zircon grains fail to confirm the original proposers’ claim that the whole area underwent hydrothermal metamorphism as a result of an impact. All that remains of the original suggestion are the nickel deposits, which do contain high PGM concentrations; that is not an uncommon feature of Ni mineralisation associated with mafic-ultramafic intrusions, and indeed much of the world’s supply of platinoid metals is mined from such bodies. Even if there had been an impact in the area, three phases of later ductile deformation, which account for the bizarre shapes of these igneous bodies, would make it impossible to detect convincingly.

The new study convincingly refutes the original impact proposal. The title of Yakymchuk et al.’s paper aptly inverts Ian Fleming’s recipe for James Bond’s tipple of choice, the martini (‘shaken, not stirred’): multiple deformation of the deep crust does indeed stir it by ductile processes, whereas an impact is definitely just a big shake. For the southern part of the complex (Toqqusap Nunaa), tectonic stirring was amply demonstrated in 1957 by Asger Berthelsen of the Greenland Geological Survey (Berthelsen, A. 1957. The structural evolution of an ultra- and polymetamorphic gneiss-complex, West Greenland. Geologische Rundschau, v. 46, p. 173-185; DOI: 10.1007/BF01802892). Coming across his paper in the early 60s, I was astonished by the complexity that Berthelsen had discovered, which inspired me to emulate his work on the Lewisian Gneiss Complex of the Inner Hebrides, Scotland. I was unable to match his efforts. The Akia Terrane has probably the most complicated geology anywhere on our planet; the original proposers of an impact there should have known better …

Tsunami risk in East Africa

The 26 December 2004 Indian Ocean tsunami was one of the deadliest natural disasters since the start of the 20th century, with an estimated death toll of around 230 thousand. Millions more were deeply traumatised, bereft of homes and possessions, rendered short of food and clean water, and threatened by disease. Together with that launched onto the seaboard of eastern Japan by the Sendai earthquake of 11 March 2011, it has spurred research into detecting the signs of older tsunamis left in coastal sedimentary deposits (see for instance: Doggerland and the Storegga tsunami, December 2020). In normally quiet coastal areas these tsunamites commonly take the form of sand sheets interbedded with terrestrial sediments, such as peaty soils. On shores fully exposed to the ocean the evidence may take the form of jumbles of large boulders that could not have been moved by even the worst storm waves.

Most of the deaths and damage wrought by the 2004 tsunami were along coasts bordering the Bay of Bengal in Indonesia, Thailand, Myanmar, India and Sri Lanka, and the Nicobar Islands. Tsunami waves were recorded on the coastlines of Somalia, Kenya and Tanzania, but had far lower amplitudes and energy so that fatalities – several hundred – were restricted to coastal Somalia. East Africa was protected to a large extent by the Indian subcontinent taking much of the wave energy released by the magnitude 9.1 to 9.3 earthquake (the third largest recorded) beneath Aceh at the northernmost tip of the Indonesian island of Sumatra. Yet the subduction zone that failed there extends far to the southeast along the Sunda Arc. Earthquakes further along that active island arc might potentially expose parts of East Africa to far higher wave energy, because of less protection by intervening land masses.

This possibility, together with the lack of any estimate of tsunami risk for East Africa, drew a multinational team of geoscientists to the estuary of the Pangani River in Tanzania (Maselli, V. and 12 others 2020. A 1000-yr-old tsunami in the Indian Ocean points to greater risk for East Africa. Geology, v. 48, p. 808-813; DOI: 10.1130/G47257.1). Archaeologists had previously examined excavations for fish-farming ponds and discovered the relics of an ancient coastal village. Digging further pits revealed a tell-tale sheet of sand in a sequence of alluvial sediments and of peaty silts and fine sands derived from mangrove swamps. The peats contained archaeological remains: sherds of pottery and even beads. The tsunamite sand sheet occurs within the mangrove facies. It contains pebbles of the bedrock that also litters the open shoreline of this part of Tanzania. There are also fossils, mainly a mix of marine molluscs and foraminifera with the remains of terrestrial rodents, fish, birds and amphibians. Scattered at random throughout the sheet, however, are human skeletons and the disarticulated bones of male and female adults and of children. Many have broken limb bones, but show no signs of blunt-force trauma or disease pathology. Moreover, there is no sign of ritual burial or weaponry, so the corpses did not result from a massacre or an epidemic. The most likely conclusion is that they are victims of an earlier Indian Ocean tsunami. Radiocarbon dating shows that it occurred at some time between the 11th and 13th centuries CE. This tallies with evidence from Thailand, Sumatra, the Andaman and Maldive Islands, India and Sri Lanka for a major tsunami in 950 CE.

Computer modelling of tsunami propagation reveals that the Pangani River lies on a stretch of the Tanzanian coast that is likely to have been sheltered from most Indian Ocean tsunamis by Madagascar and the shallows around the Seychelles Archipelago. Seismic events on the Sunda Arc or on the lesser Makran subduction zone off southeastern Iran and Pakistan may not have been capable of generating sufficient energy to raise tsunami waves at the latitude of the Tanzanian coast much higher than those witnessed there in 2004, unless their arrival coincided with high tide; in 2004 damage was limited because the waves arrived at low tide. However, the topography of the Pangani estuary may well amplify water levels by constricting a surge. Such a mechanism can account for variations in destruction during the 2011 Tohoku-Sendai tsunami in NE Japan.
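The amplifying effect of a narrowing estuary can be illustrated with Green’s law, a classical approximation for long waves travelling along a channel whose width b and depth h change gradually: wave amplitude scales as b^(-1/2)·h^(-1/4). This textbook idealisation (no reflection, friction or wave breaking) is swapped in purely for illustration; it is not the propagation modelling used by Maselli et al., and the channel dimensions below are hypothetical rather than measurements of the Pangani estuary.

```python
def greens_law_amplitude(a1, width1, depth1, width2, depth2):
    """Amplitude at section 2 of a long wave that has amplitude a1 at section 1.

    Green's law for gradually varying channels:
        A2 / A1 = (b1 / b2) ** 0.5 * (h1 / h2) ** 0.25
    Widths and depths may be in any consistent units; the result keeps a1's units.
    """
    return a1 * (width1 / width2) ** 0.5 * (depth1 / depth2) ** 0.25


# Hypothetical estuary: 2 km wide and 10 m deep at the mouth,
# narrowing to 500 m wide and 5 m deep upstream.
a_mouth = 0.5  # metres
a_upstream = greens_law_amplitude(a_mouth, 2000, 10, 500, 5)
print(f"{a_mouth} m at the mouth -> {a_upstream:.2f} m upstream")
```

In this idealised case the surge grows about 2.4 times, mostly because of the fourfold narrowing; a real estuary would also involve reflection, friction and tidal state.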

Whether coastal Tanzania is at high risk of tsunamis can only be confirmed by deeper excavation into coastal sediments, to check for the multiple sand sheets that characterise areas closer to the Sunda Arc. So far, the sand sheet in the Pangani estuary is the only tsunamite recorded in East Africa.

Weak lithosphere delayed the formation of continents

There are very few tangible signs that the Earth had continents at its surface before about 4 billion years (Ga) ago. The most cited evidence that they may have existed in the Hadean Eon comes from zircon grains with radiometric ages of up to 4.4 Ga that were recovered from much younger sedimentary rocks in Western Australia. These tiny grains also show isotopic anomalies that support the existence of continental material, i.e. rocks of broadly granitic composition, only about 150 Ma after the Earth formed (see: Zircons and early continents no longer to be sneezed at; February 2006). So, how come relics of such early continents have yet to be discovered in the geological record? After all, the granitic rocks (in the broad sense) that form continents are so much less dense than the mantle that modern subduction is incapable of recycling them en masse. Indeed, mantle convection of any type in the hotter Earth of the Hadean seems unlikely to have swallowed continents once they had formed. Perhaps they are hiding in another guise among younger rocks of the continental crust. But, believe me, geologists have been hunting for them, to no avail, in every scrap of existing continental crust since 1971, when gneisses found in West Greenland by New Zealander Vic McGregor turned out to be almost 3.8 Ga old. This set off a grail-quest, which still continues, to negate James Hutton’s ‘No vestige of a beginning …’ concept of geological time.

There is another view: early continental lithosphere may have returned to the mantle piece by piece by other means. One, which has operated since the Archaean, is as debris from surface erosion, transported to the ocean floor and thence subducted along with denser material of the oceanic lithosphere. Another possibility is that before 4 Ga continental lithosphere had far less strength than it did later; it may have been continually torn into fragments small enough for viscous drag to overcome their buoyancy and carry them into the mantle by convective processes. Two things seem to confer strength on continental lithosphere younger than 4 billion years: its low surface heat flow and heat production, which stem from low concentrations of the radioactive isotopes of uranium, thorium and potassium in the lower crust and sub-continental mantle; and bolstering by the cratons that form the cores of all major continents. Three geoscientists at Monash University in Victoria, Australia, have examined how parts of the early convecting mantle may have undergone chemical and thermal differentiation (Capitanio, F.A. et al. 2020. Thermochemical lithosphere differentiation and the origin of cratonic mantle. Nature, v. 588, p. 89-94; DOI: 10.1038/s41586-020-2976-3). These processes are an inevitable outcome of decompression melting: as mantle rises during convection, the pressure on it decreases and it begins to melt. Continual removal of the magmas produced in this way would strip the residue not only of much of its heat-producing capacity (U, Th and K preferentially enter silicate melts) but also of its volatiles, especially water. Even if granitic magmas were completely recycled back to the mantle by the greater vigour of the hot, early Earth, at least some of the residue of partial melting would remain. Its dehydration would increase its viscosity (strength).
Over time this would build what eventually became the thick, highly viscous mantle roots (tectosphere) on which increasing amounts of granitic magma could stabilise to establish the oldest cratons. More and more such cratonised crust would accumulate, becoming increasingly unlikely to be resorbed into the mantle. Although cratons are not zoned in terms of the age of their constituent rocks, they do jumble together several billion years’ worth of continental crust in what used to be called ‘the Basement Complex’.
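The link between depletion in U, Th and K and lower heat production can be made concrete with the empirical relation commonly used to estimate radiogenic heat production in rocks (after Rybach): A = 10⁻⁵·ρ·(9.52·C_U + 2.56·C_Th + 3.48·C_K), with A in µW/m³, density ρ in kg/m³, U and Th in ppm and K in weight percent. The compositions below are illustrative round numbers, not data from Capitanio et al.

```python
def heat_production(density, u_ppm, th_ppm, k_wt_pct):
    """Radiogenic heat production in microwatts per cubic metre.

    Empirical relation: A = 1e-5 * rho * (9.52*U + 2.56*Th + 3.48*K),
    with rho in kg/m^3, U and Th in ppm, and K in weight percent.
    """
    return 1e-5 * density * (9.52 * u_ppm + 2.56 * th_ppm + 3.48 * k_wt_pct)


# Illustrative compositions (round numbers, not measured samples).
granite = heat_production(2700, u_ppm=4.0, th_ppm=15.0, k_wt_pct=3.5)
# A melt-depleted mantle residue: most U, Th and K have gone into the magmas.
residue = heat_production(3300, u_ppm=0.005, th_ppm=0.02, k_wt_pct=0.005)
print(f"granite: {granite:.2f} uW/m^3")
print(f"depleted residue: {residue:.4f} uW/m^3")
```

Because the relation is linear in concentration, stripping, say, 90% of the U, Th and K from a rock cuts its heat production by 90%, which is the sense in which melt extraction leaves a cooler residue.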

Early in this process, heat would have made much of the lithosphere too weak to form rigid plates and to sustain the plate tectonics with which geologists are so familiar from later in Earth’s history. The evolution that Capitanio et al. propose suggests that the earliest rigid plates were capped by Archaean continental crust. That implies that subduction of oceanic lithosphere started at their margins, with intra-oceanic destructive plate margins and island arcs being a later feature of tectonics. It is in the later Proterozoic Eon that evidence for the accretion of arc terranes becomes obvious, their magmatic products being plastered onto cratons and further enlarging the continents.

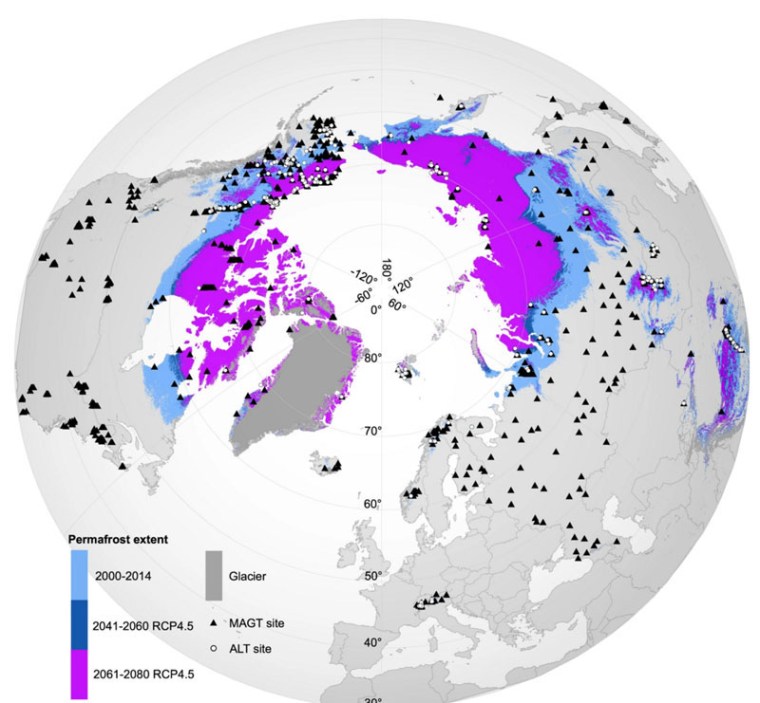

Thawing permafrost, release of carbon and the role of iron

Global warming is clearly happening. The crucial question is 'How bad can it get?' Most pundits focus on the capacity of the globalised economy to cut carbon emissions – mainly CO2 from fossil fuel burning and methane from commercial livestock herds. Can they be reduced in time to reverse the rise in global mean surface temperature that has already taken place and to avert the rises that lie ahead? Every now and then there is mention of the importance of natural means of drawing down greenhouse gases: planting more trees, preserving and encouraging wetlands and their accumulation of peat, and so on. For several months of the Northern Hemisphere summer the planet's largest bogs actively sequester carbon in the form of dead vegetation. For the rest of the year they are frozen stiff. Muskeg and tundra form a band across the alluvial plains of the great rivers that drain North America and Eurasia towards the Arctic Ocean. The seasonal bogs lie above sediments, deposited in earlier river basins and swamps, that have remained permanently frozen since the last glacial period. At high latitudes such permafrost begins just a few metres below the surface and extends down to as much as a kilometre; further south it begins deeper and becomes thinner and more patchy, until it disappears south of about 60°N except in mountainous areas. Permafrost is melting relentlessly, sometimes with spectacular results collectively known as thermokarst, which involve surface collapse, mudslides and erosion by summer meltwater.

Permafrost is a good preserver of organic material, as shown by the almost perfect remains of mammoths and other animals that have been found where rivers have eroded their frozen banks. The latest spectacular find is a mummified wolf pup unearthed by a gold prospector from 57 ka-old permafrost in the Yukon, Canada. She was probably buried when a wolf den collapsed. Thawing exposes buried carbonaceous material to processes that release CO2, as does the drying-out of peat in more temperate climes. It has long been known that the vast reserves of carbon preserved in frozen ground and in gas hydrate in sea-floor sediments present an immense danger of accelerated greenhouse conditions should permafrost thaw quickly and deep seawater warm up; the first is certainly starting to happen in boreal North America and Eurasia. Research into Arctic soils had suggested a potential mitigating factor. Iron-3 oxides and hydroxides, the colorants of soils that overlie permafrost, have chemical properties that allow them to trap carbon, in much the same way that they trap arsenic by adsorption onto their surfaces (see: Screening for arsenic contamination, September 2008).

But, as in the case of arsenic, mineralogical trapping of carbon and its protection from oxidation to CO2 can be thwarted by bacterial action (Patzner, M.S. and 10 others 2020. Iron mineral dissolution releases iron and associated organic carbon during permafrost thaw. Nature Communications, v. 11, article 6329; DOI: 10.1038/s41467-020-20102-6). Monique Patzner of the University of Tuebingen, Germany, and her colleagues from Germany, Denmark, the UK and the US have studied peaty soils overlying permafrost north of the Arctic Circle in Sweden. Their mineralogical and biological findings came from cores driven through the different layers above the deep permafrost. In the layer immediately above permanently frozen ground, carbon certainly does bind to iron-3 minerals. However, higher layers that show evidence of longer periods of thawing contain more reduced iron-2 dissolved in the soil water, along with more dissolved organic carbon, i.e. carbon prone to oxidation to carbon dioxide. Also, biogenic methane – a more powerful greenhouse gas – increases in the more waterlogged upper sediments. Among the active bacteria are varieties whose metabolism reduces the insoluble iron in ferric oxyhydroxide minerals to the soluble ferrous form (iron-2). As in the case of arsenic contamination of groundwater, the substances adsorbed on iron oxyhydroxides are being released as a result of strongly reducing conditions.

Applying their results to the entire permafrost inventory at high northern latitudes, the team predicts a worrying scenario. Initial thawing can indeed lock in up to tens of billions of tonnes of carbon once preserved in permafrost, yet this amounts to only a fifth, at best, of the carbon present in the thawing layer between the surface and the permafrost. The trapped carbon alone is equivalent to between 2 and 5 times the annual anthropogenic release of carbon by burning fossil fuels. Nevertheless, reductive dissolution of its host minerals means that it is destined to be emitted eventually, if thawing continues. This adds to the even vaster potential releases of greenhouse gases in the form of biogenic methane from waterlogged ground. However, there is some evidence to the contrary. During the deglaciation between 15 and 8 thousand years ago – except for the thousand years of the Younger Dryas cold episode – land-surface temperatures rose far more rapidly than they are rising at present. A study of carbon isotopes in air trapped as bubbles in Antarctic ice suggests that methane emissions from old organic carbon exposed to bacterial action by thawing permafrost were then much lower than those claimed by Patzner et al. for today's slower thawing, as were emissions from the breakdown of submarine gas hydrates (see: Old carbon reservoirs unlikely to cause massive greenhouse gas release, study finds. Science Daily, 20 February 2020).

Origin of life: some news

For self-replicating cells to form there are two essential precursors: water and simple compounds based on the elements carbon, hydrogen, oxygen and nitrogen (CHON). Hydrogen is not a problem, being by far the most abundant element in the universe. Carbon, oxygen and nitrogen form in the cores of stars through nuclear fusion of hydrogen and helium. These elemental building blocks must then be dispersed by supernova explosions, ultimately reaching places where water can exist in liquid form and undergo reactions that culminate in living cells. That is only possible on solid bodies that lie at just the right distance from a star to support average surface temperatures between the freezing and boiling points of water. Most important is that such a planet in the 'Goldilocks Zone' has sufficient mass for its gravity to retain water. Some surface water evaporates to contribute vapour to the atmosphere. Exposed to ultraviolet radiation, H2O vapour dissociates into hydrogen and oxygen. The light hydrogen can then be lost to space if the planet's escape velocity is not high enough compared with the thermal speed of its molecules. Such photo-dissociation and escape into outer space may have caused Mars to lose far more hydrogen than oxygen, leaving its surface dry but rich in reddish iron oxides.
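To put rough numbers on that escape argument, here is a minimal Python sketch comparing a planet's escape velocity, sqrt(2GM/r), with the mean thermal speed of a gas, sqrt(8kT/πm). The planetary masses and radii are rounded textbook values. The 1000 K upper-atmosphere temperature is an illustrative assumption (roughly Earth's exosphere; Mars's is cooler). The often-quoted rule of thumb, that a gas is retained over billions of years only if escape velocity exceeds about six times its mean thermal speed, is a simplification of Jeans escape and not a result from this post.

```python
import math

# Physical constants (SI units)
G = 6.674e-11        # gravitational constant, m^3 kg^-1 s^-2
K_B = 1.380649e-23   # Boltzmann constant, J/K
AMU = 1.66054e-27    # atomic mass unit, kg

# Rounded planetary masses (kg) and mean radii (m)
PLANETS = {
    "Earth": (5.972e24, 6.371e6),
    "Mars": (6.417e23, 3.3895e6),
}

# Molecular masses (amu) of the two products of water photo-dissociation
GASES = {"H2": 2.016, "O2": 31.998}

# Illustrative upper-atmosphere temperature: an assumption, not a measured value
T_EXOSPHERE = 1000.0


def escape_velocity(mass_kg: float, radius_m: float) -> float:
    """Speed needed to escape a body's gravity from radius r: sqrt(2GM/r)."""
    return math.sqrt(2 * G * mass_kg / radius_m)


def mean_thermal_speed(molecular_mass_amu: float, temp_k: float) -> float:
    """Mean speed of Maxwell-Boltzmann molecules: sqrt(8kT / (pi m))."""
    m = molecular_mass_amu * AMU
    return math.sqrt(8 * K_B * temp_k / (math.pi * m))


def escape_ratio(planet: str, gas: str, temp_k: float = T_EXOSPHERE) -> float:
    """Escape velocity divided by mean thermal speed; smaller values favour loss to space."""
    mass, radius = PLANETS[planet]
    return escape_velocity(mass, radius) / mean_thermal_speed(GASES[gas], temp_k)


if __name__ == "__main__":
    for planet in PLANETS:
        for gas in GASES:
            print(f"{planet:5s} {gas:3s} v_esc / v_thermal = {escape_ratio(planet, gas):5.1f}")
```

Whatever temperature is assumed, hydrogen's ratio is about four times smaller than oxygen's on the same planet (the square root of their mass ratio, roughly 32/2), and Mars's weaker gravity cuts every ratio to under half of Earth's. That is why Mars would lose hydrogen far more readily than oxygen.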

Despite liquid water being essential for the origin of planetary life, it is a mixed blessing for the key molecules that support biology. This 'water paradox' stems from water molecules attacking and breaking the chemical bonds that link together the long chains of proteins and nucleic acids (RNA and DNA). Living cells resolve the paradox by limiting the circulation of liquid water within them: they are largely filled with a gel that holds the key molecules together, rather than being the bags of water they have commonly been imagined to be. That notion stemmed from the idea of a 'primordial soup', popularised by Darwin and his early followers, supposedly preserved in cells' cytoplasm. That is now known to be wrong and, in any case, the chemistry simply would not work, either in a 'warm little pond' or close to a deep-sea hydrothermal vent, because the molecular chains would be broken as soon as they formed. Modern evolutionary biochemists suggest that much of the chemistry leading to living cells must have taken place in environments that were sometimes dry and sometimes wet, such as ephemeral puddles on land. Science journalist Michael Marshall has just published an easily read, open-access essay on this vexing yet vital issue in Nature (Marshall, M. 2020. The Water Paradox and the Origins of Life. Nature, v. 588, p. 210-213; DOI: 10.1038/d41586-020-03461-4). If you are interested, click on the link to read Marshall's account of current origins-of-life research into the role of endlessly repeated wet-dry cycles on the early Earth's surface. It makes fascinating reading, as the experiments take the matter far beyond the spontaneous formation of the amino acid glycine found by Stanley Miller when he passed sparks through a mixture of methane, ammonia, hydrogen and water vapour in his famous 1953 experiment at the University of Chicago. Marshall was spurred to write in advance of the landing on Mars of NASA's Perseverance mission in February 2021. 
The Perseverance rover aims to test the new hypotheses in a series of lake sediments that appear to have been deposited by wet-dry cycles in a small Martian impact crater (Jezero Crater) early in the planet’s history when surface water was present.

That CHON and simple compounds made from them are plentiful in interstellar gas and dust clouds has been known since the development of means of analysing their spectra. The organic chemistry of carbonaceous meteorites is also well known; they even smell of hydrocarbons. Accretion of these primitive materials during planet formation would readily provide feedstock for life-forming processes on physically suitable planets. But how did CHON get from giant molecular clouds into the planetesimals? An odd-sounding organic compound, hexamethylenetetramine ((CH2)6N4) or HMT, is made industrially by combining formaldehyde (CH2O) and ammonia (NH3). Introduced in the late 19th century as an antiseptic to treat urinary tract infections (UTIs), it is now used as a solid fuel for lightweight camping stoves, as well as for much else besides. HMT has a potentially interesting role to play in the origin of life. Experiments investigating what happens when starlight and heat act on simple CHON molecules in interstellar clouds, such as ammonia, formaldehyde, methanol and water, have yielded products containing up to 60% by mass of HMT.
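For reference, the industrial route from formaldehyde and ammonia mentioned above can be written as a balanced equation. This is standard textbook stoichiometry, not something taken from Oba et al., and it is not a claim about the pathway by which HMT forms in interstellar clouds.

```latex
\[
6\,\mathrm{CH_2O} + 4\,\mathrm{NH_3} \longrightarrow (\mathrm{CH_2})_6\mathrm{N_4} + 6\,\mathrm{H_2O}
\]
```

Each side carries 6 carbon, 24 hydrogen, 6 oxygen and 4 nitrogen atoms, so the water released accounts for all the oxygen supplied by the formaldehyde.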

HMT molecules have a cage-like structure, so the compound forms crystals that gather into large, fluffy aggregates, unlike the gases from which it can form in deep space. Together with interstellar silicate dust, such sail-like structures could accrete into planetesimals in nebular star nurseries under the influence of gravity and light pressure. Geochemists from several Japanese institutions and NASA have, for the first time, found HMT in three carbonaceous chondrites, albeit at very low concentrations – parts per billion (Y. Oba et al. 2020. Extraterrestrial hexamethylenetetramine in meteorites — a precursor of prebiotic chemistry in the inner Solar System. Nature Communications, v. 11, article 6243; DOI: 10.1038/s41467-020-20038-x). Once concentrated in planetesimals – the parents of meteorites when they are smashed by collisions – HMT can perform the useful chemical 'trick' of breaking down once again to very simple CHON compounds when warmed. At close quarters such organic precursors can engage in polymerising reactions whose end products could be the far more complex sugars and amino acid chains that are the characteristic CHON compounds of carbonaceous chondrites. Yasuhiro Oba and colleagues may have found the missing link between interstellar space, planet formation and the synthesis of life through the mechanisms that resolve the 'water paradox' outlined by Michael Marshall.

See also: Scientists Find Precursor of Prebiotic Chemistry in Three Meteorites (Sci-news, 8 December 2020)

How like the Neanderthals are we?

In the most basic, genetic sense, we were sufficiently alike for us to have interbred with them regularly, possibly wherever the two human groups met. As a result the genomes of all modern humans contain segments of DNA derived from Neanderthals (see: Everyone now has their Inner Neanderthal; February 2020). East Asian people also carry some Denisovan genes, as do the original people of Australasia and the first Americans. Those very facts suggest that members of each group did not find individuals from the others especially repellent as potential sexual partners! But that covers only a tiny part of what constitutes culture. There is archaeological evidence that Neanderthals and modern humans made similar tools. Both had the skills to make bifacial 'hand axes' before they even met, around 45 to 40 ka ago. A cave (La Grotte des Fées) near Châtelperron, to the west of the French Alps, that was occupied by Neanderthals until about 40 ka yielded a selection of stone tools, including blades, known as the Châtelperronian culture, which represents a major technological breakthrough by its makers. It is sufficiently similar to the Aurignacian stone industry of the anatomically modern humans (AMH) who first migrated into Europe from the east around that time to pose a conundrum: did the Neanderthals copy Aurignacian techniques when they met AMH, or vice versa? Making blades involves splitting them off large flint cores with just a couple of blows from a softer tool. At the very least Neanderthals had the intellectual capacity to learn this very difficult skill; they may even have invented it (see: Disputes in the cavern; June 2012). Then there is growing evidence for artistic abilities among Neanderthals, and even Homo erectus gets a look-in (see: Sophisticated Neanderthal art now established; February 2018).

For a long time, a pervasive aspect of AMH culture has been ritual. Indeed much early art may have been bound up with ritualistic social practices, as it has been in historic times. A persuasive hint at Neanderthal ritual lies in the peculiar structures – dated at 177 ka – found far from the light of day in the Bruniquel Cave in south-western France (see: Breaking news: Cave structures made by Neanderthals; May 2016). They comprise circles fashioned from broken-off stalactites, and fires seem to have been lit in them. The most enduring rituals among anatomically modern humans have been those surrounding death: we bury our dead, thereby preserving them, and 'send them off' in a variety of ways, with grave goods or even by burning them and putting the ashes in a pot. A Neanderthal skeleton (dated at 50 ka) found in a cave at La Chapelle-aux-Saints appears to have been buried and made safe from scavengers and erosion. There are older Neanderthal graves at Shanidar in Iraq, and even older burials (90 to 100 ka) at Qafzeh in Palestine, where numerous individuals, including a mother and child, had been interred, although the Qafzeh people are generally regarded as early AMH rather than Neanderthals. Some burials are associated with possible grave goods, such as pieces of red ochre (hematite) pigment, animal body parts and even pollen that suggests flowers had been scattered on the remains. The possibility of deliberate offerings or tributes, and even the notion of burial, have met with scepticism among some palaeoanthropologists. One reason for the scientific caution is that many of the finds were excavated long before the introduction of rigorous modern archaeological protocols.

Recently a multidisciplinary team involving scientists from France, Belgium, Italy, Germany, Spain and Denmark exhaustively analysed the context and remains of a Neanderthal child found in the La Ferrassie cave (Dordogne region of France) in the early 1970s (Balzeau, A. and 13 others 2020. Pluridisciplinary evidence for burial for the La Ferrassie 8 Neandertal child. Scientific Reports, v. 10, article 21230; DOI: 10.1038/s41598-020-77611-z). Estimated to have been about 2 years old, the child is anatomically complete. Bones of other animals found in the same deposit were less well preserved than those of the child, adding weight to the hypothesis that a body, rather than bones, had been buried soon after death. The luminescence age of the sediments enveloping the skeleton is considerably older than the radiocarbon age of one of the child's bones. That is difficult to explain other than by deliberate burial. It is almost certain that a pit had been dug and the child placed in it, to be covered in sediment. The skeleton was oriented E-W, with the head towards the east. Remarkably, other Neanderthal remains at the La Ferrassie site also have their heads to the east of the rest of their bones, suggesting perhaps a common practice of orientation relative to sunrise and sunset.

It is slowly dawning on palaeoanthropologists that Neanderthal culture and cognitive capacity were not greatly different from those of anatomically modern humans. That beings so similar to ourselves disappeared from the archaeological record within a few thousand years of the first appearance of AMH in Europe has long been attributed, in short, to the Neanderthals being 'second best' in many ways. That may not have been the case. Since the last glaciation something similar has happened twice in Europe, and analysis of ancient DNA has documented both events in far more detail than the disappearance of the Neanderthals. Mesolithic hunter-gatherers were followed by early Neolithic farmers with genetic affinities to living people in Northern Anatolia in Turkey, close to the region where the cultivation of crops began. The DNA record from human remains of Neolithic age shows no sign of genomes with a clear Mesolithic signature, yet some of the genetic features of these hunter-gatherers remain in the genomes of modern Europeans. Similarly, ancient DNA recovered from Bronze Age human bones suggests almost complete replacement of the Neolithic inhabitants by people who introduced metallurgy, a horse-centred culture and a new kind of ceramic – the Bell Beaker. This genetic group is known as the Yamnaya, whose origins lie in the steppe of modern Ukraine and European Russia. In this Neolithic-Bronze Age population transition the earlier genomes disappear from the ancient DNA record. Yet Europeans still carry traces of that earlier genetic heritage. The explanation now accepted by geneticists and archaeologists alike is that both events involved assimilation and merging through interbreeding. That seems just as applicable to the 'disappearance' of the Neanderthals.

See also: Neanderthals buried their dead: New evidence (Science Daily, 9 December 2020)