Almost all the devastating earthquakes within living memory, and the tsunamis that followed some of them, have occurred where tectonic plates meet and move past one another, either horizontally through strike-slip motion or vertically through subduction. This link between real events and the central theory of global dynamics, plate tectonics, gives an impression that we can predict where damaging and deadly earthquakes are likely to happen, if not the more useful matter of when the lithosphere might rupture. Such confidence is potentially very dangerous. The deadliest earthquake in recorded history killed at least 800,000 people in China’s Shaanxi Province in 1556 when, according to a description written shortly afterwards, ‘… various misfortunes took place… In some places, the ground suddenly rose up and formed new hills, or it sank abruptly and became new valleys. In other areas, a stream burst out in an instant, or the ground broke and new gullies appeared…’. Shaanxi is far from any plate boundary. A study of Chinese historical records covering the last two millennia (Liu, M. et al. 2011. 2000 years of migrating earthquakes in North China: How earthquakes in midcontinents differ from those at plate boundaries. Lithosphere, v. 3, p. 128-132) reveals a distinctive pattern in the locations of large intraplate earthquakes. Rather than recurring along lines, as do those at plate boundaries, the earthquakes ‘hopped’ from place to place without striking the same area twice. Liu and colleagues attribute this almost random pattern to the reactivation of interlinked faults as the crust is gradually loaded, over broad areas, by the movements of distant plates. After a short period of reactivation one fault locks, so that the energy that builds up afterwards is eventually released by another fault in the plexus of crustal weaknesses.

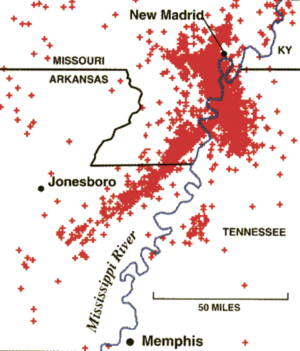

The best-studied example of such intraplate seismicity lies midway along the Mississippi valley in the central US, between St Louis and Memphis. In 1811 and 1812 four earthquakes of Magnitude 7 to 8 struck the region. The worst affected place was the small township of New Madrid on the banks of the great river, where mud and sand spouted from numerous sand volcanoes. No-one died there, but the tremors were felt over a million square kilometers, with bells ringing spontaneously as far away as Boston and Toronto. It is now known that this stretch of the Mississippi basin lies above a graben in the ancient basement beneath the alluvial sediments, one of whose faults was reactivated, perhaps in a way analogous to that proposed for Chinese seismicity. Seth Stein of Northwestern University, Illinois, a coauthor of Liu et al. (2011), believes that stress redistribution through a Midwestern fault network was responsible, and that further events are likely at some unknown time in the future, both here and in other areas underlain by ancient fault complexes. Indeed, sporadic earthquakes of up to Magnitude 7 have struck the eastern US, Canada and the Atlantic seaboard since European settlement. Since the largest of the New Madrid quartet, however, populations have grown across areas where the risk has seemed slight. Future earthquakes there could have extremely serious consequences that are difficult to plan for, because both their location and their timing are unpredictable. In some respects this is a more worrying prospect than where major events are inevitable at some point, as along the San Andreas Fault. If the theory of networked stress redistribution through otherwise inert parts of the continental crust is borne out, few if any major conurbations worldwide could be considered seismically safe.

In some respects the theory is a small-scale version of the mechanical linkage through all major plate boundaries that some have proposed to explain why great earthquakes (around and above Magnitude 8) cluster in time around the globe. Since 2000, great earthquakes have occurred on subduction zones beneath Sumatra, the Himalaya, the Andes, Central America, Alaska, New Guinea, the mid-Pacific, Japan and the Kurile Islands, on the strike-slip system that cuts New Zealand, and in the intraplate setting of the 2008 Sichuan earthquake in China. Almost all plate boundaries link up globally, and it seems likely that stress is redistributed along boundaries, especially between adjacent segments, as documented for the great Anatolian fault system of Turkey and the Indonesian subduction zone. However, a mechanism that transmits stress beyond individual plates seems unlikely.
